
In the end, a table's evolution is the sequence of all manifests added since its creation. Iceberg uses these manifests to represent the state of the table after each modification, known as a snapshot.

I read the table with `table("my_iceberg_catalog.db.vaccinations")` as `vaccinations` and display it with `vaccinations.show()`:

| location | date | vaccine | source_url | total_vaccinations | people_vaccinated | people_fully_vaccinated | total_boosters |
|---|---|---|---|---|---|---|---|
| Belgium | 2020-12-28 | Pfizer/BioNTech | https://epistat.… | | | | |

Thanks to the separation between physical and logical layout, we don't need to filter data according to how it is partitioned to prevent full data scans and slow queries. In my vaccinations table I have partitioned the data by location and by date: `location=Brazil/date=/*.parquet`. I can query the table while omitting the location and still get a fast read, because Iceberg will only select the necessary partitions, in the locations that have this date. The query chains `.table("my_iceberg_catalog.db.vaccinations")` with `.select("location", "date", "people_fully_vaccinated_percentage")`.
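To make the idea of pruning from metadata more concrete, here is a small toy model in plain Scala. It is only a sketch: the case classes, file names and dates are hypothetical and are not Iceberg's API or my real data. It illustrates the principle that partition values recorded per data file let a scan skip files, even when the query filters on the date alone.

```scala
// Toy model only: these types are hypothetical and are not part of Iceberg.
object PartitionPruningSketch {
  // A data file plus the partition values recorded for it in table metadata.
  final case class DataFile(path: String, location: String, date: String)

  // A manifest lists data files; a snapshot is the set of manifests
  // that describe the table at one point in time.
  final case class Manifest(files: Seq[DataFile])
  final case class Snapshot(id: Long, manifests: Seq[Manifest])

  // Plan a scan by testing the predicate against the recorded partition
  // values, so that only matching files would ever be opened.
  def planScan(snapshot: Snapshot)(keep: DataFile => Boolean): Seq[String] =
    snapshot.manifests.flatMap(_.files).filter(keep).map(_.path)

  // Made-up files and dates, for illustration only.
  val first: Manifest = Manifest(Seq(
    DataFile("data/location=Brazil/date=2021-06-01/a.parquet", "Brazil", "2021-06-01"),
    DataFile("data/location=Brazil/date=2021-06-02/b.parquet", "Brazil", "2021-06-02")))
  val second: Manifest = Manifest(Seq(
    DataFile("data/location=Belgium/date=2021-06-01/c.parquet", "Belgium", "2021-06-01")))

  // Each write adds a manifest; a snapshot sees all manifests added so far.
  val snapshot1: Snapshot = Snapshot(1L, Seq(first))
  val snapshot2: Snapshot = Snapshot(2L, Seq(first, second))

  // Filter on the date only: no location predicate is needed to skip files.
  val selected: Seq[String] = planScan(snapshot2)(_.date == "2021-06-01")
}
```

In this model `selected` contains the Brazil and Belgium files for the chosen date and skips the other Brazil file, while planning the same scan against `snapshot1` sees only what existed after the first write.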

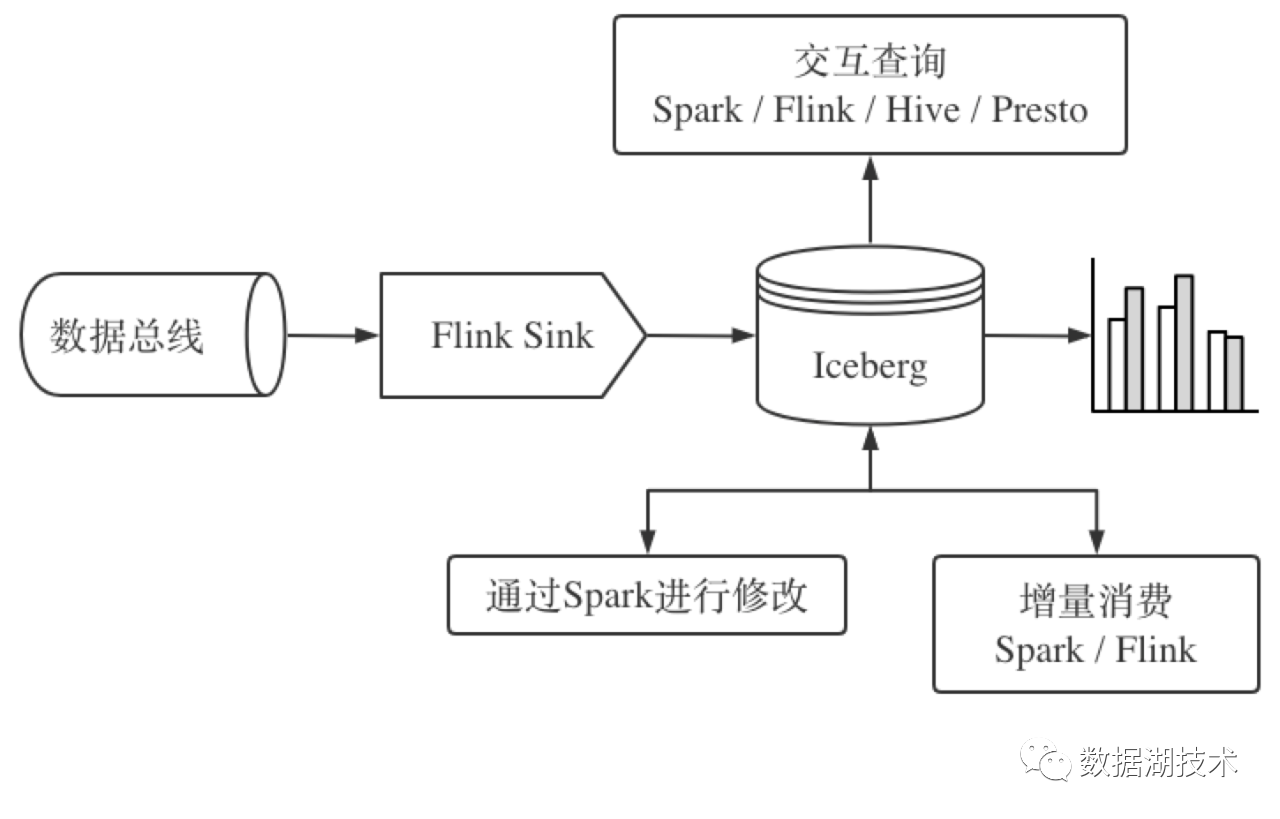

I set up a local S3-like object storage with MinIO on my local machine:

    $ minio server ~/Downloads/minio-data --console-address :9001
    Automatically configured API requests per node based on available memory on the system: 155
    Finished loading IAM sub-system (took 0.0s of 0.0s to load data).

Then I create a bucket named `warehouse` on the MinIO console, which the command above serves on port 9001. This is where data written with Iceberg will be stored.

To ensure data consistency in case of concurrent reads and writes, Iceberg tracks data and tables using a database. In my test I use a PostgreSQL database for that purpose. I start a server on the default port 5432 and create a database named `iceberg_db`.

I am going to use the Spark framework with Scala for this test. I am familiar with it and, according to the documentation, it is currently the most advanced integration with the Iceberg format. Iceberg also has integrations with other engines such as Apache Flink, Trino (Presto) or Dremio.

I declare the versions of Scala, Spark, Iceberg, AWS-SDK and the PostgreSQL connector in my Maven pom.xml properties, so that dependencies such as `org.scala-lang:scala-library` can refer to them.

… data under the `data/` directory.
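To show how the MinIO bucket, the PostgreSQL database and Spark could fit together, here is a minimal sketch of Spark settings for an Iceberg catalog named `my_iceberg_catalog`. The property keys are the ones documented by Iceberg for its Spark, JDBC catalog and S3FileIO integrations. The MinIO API address `http://localhost:9000` and the PostgreSQL user and password are assumptions of mine, to be replaced with your own values. S3 credentials and region are assumed to be provided through the environment.

```scala
// Sketch only: endpoint, user and password are assumed values.
object IcebergCatalogSettings {
  private val catalog = "spark.sql.catalog.my_iceberg_catalog"

  val settings: Map[String, String] = Map(
    // Enables Iceberg SQL extensions in Spark.
    "spark.sql.extensions" ->
      "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
    // Registers the catalog and backs it with a JDBC (PostgreSQL) database.
    catalog -> "org.apache.iceberg.spark.SparkCatalog",
    s"$catalog.catalog-impl" -> "org.apache.iceberg.jdbc.JdbcCatalog",
    s"$catalog.uri" -> "jdbc:postgresql://localhost:5432/iceberg_db",
    s"$catalog.jdbc.user" -> "postgres",     // assumption
    s"$catalog.jdbc.password" -> "postgres", // assumption
    // Stores table files in the "warehouse" bucket through the AWS SDK.
    s"$catalog.warehouse" -> "s3://warehouse",
    s"$catalog.io-impl" -> "org.apache.iceberg.aws.s3.S3FileIO",
    s"$catalog.s3.endpoint" -> "http://localhost:9000", // assumed MinIO API address
    s"$catalog.s3.path-style-access" -> "true"
  )

  // With Spark on the classpath, the settings could be applied like this:
  //   val spark = settings.foldLeft(SparkSession.builder().master("local[*]")) {
  //     case (builder, (key, value)) => builder.config(key, value)
  //   }.getOrCreate()
}
```

Keeping the settings in a plain `Map` makes them easy to inspect or override before the Spark session is created.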

Apache Iceberg is a new table format for storing large, slow-moving tabular data on cloud data lakes like S3 or Cloud Storage. It was developed at Netflix and then incubated at the Apache Software Foundation. It has been a top-level Apache project since 2020 and reached version 1.0.0 in November 2022. It brings a new level of abstraction on top of the Parquet format, which has become the industry standard for storing datasets in data lakes. This new level of abstraction aims to solve common issues encountered when working with the Parquet format:

- Atomic, consistent and fast updates (e.g. the issues when writing Parquet on S3 with Spark that I described in my S3A committers article)
- Schema evolution without the need to rewrite the entire dataset (new column, column rename, etc.)
- Efficient reads that only read the needed partitions

I am going to use a subset of the Covid 19 vaccination data provided by Our World in Data.
